Update: The paper is now published and can be downloaded from the journal webpage.

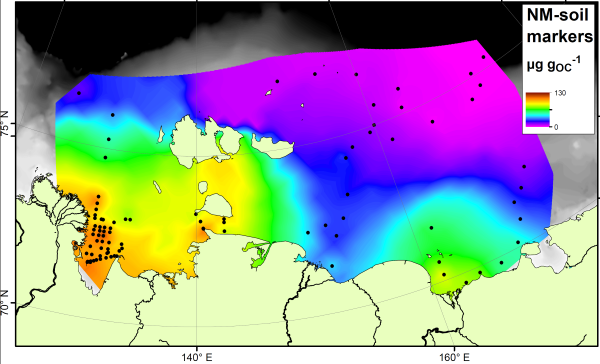

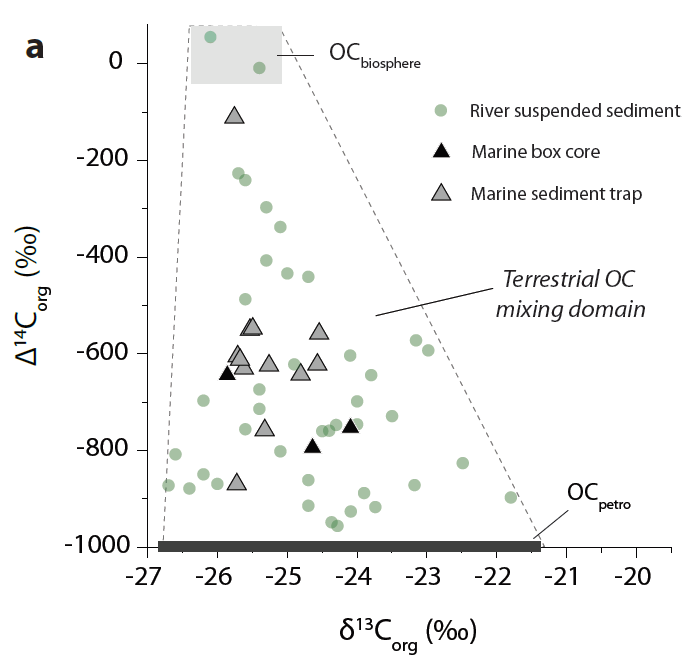

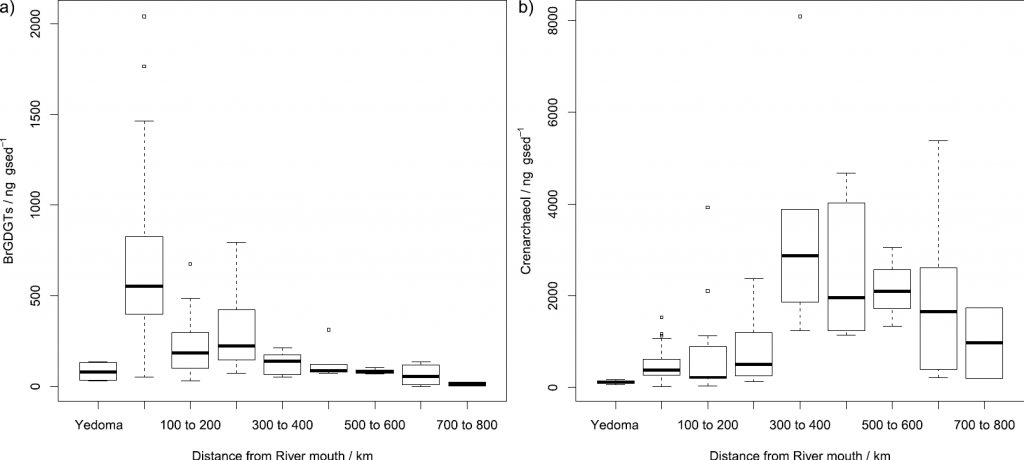

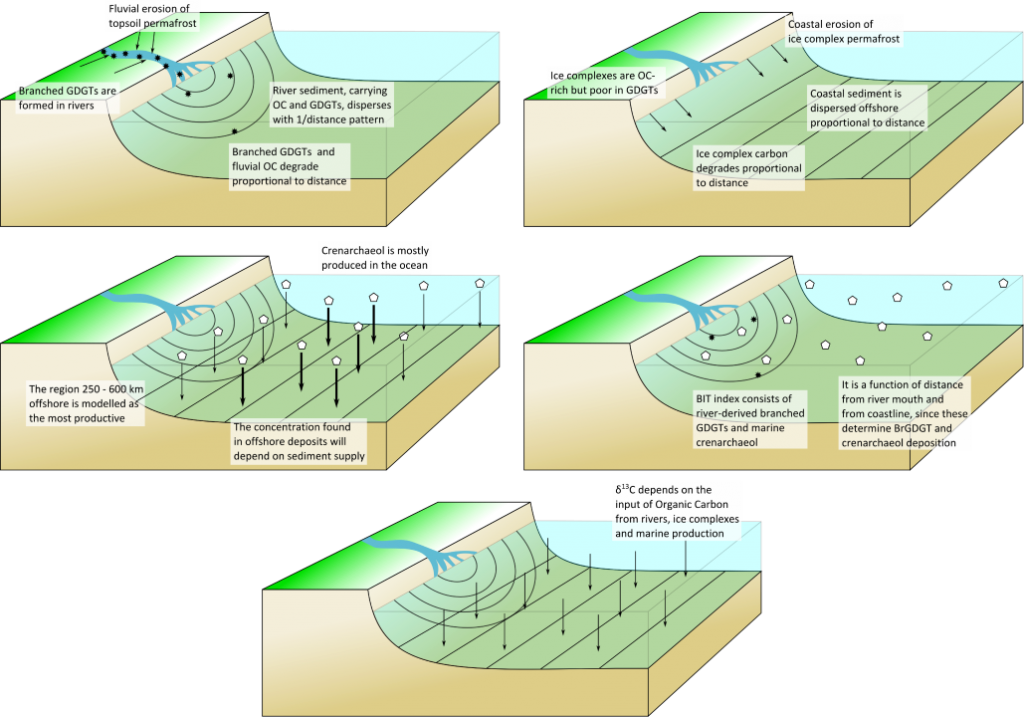

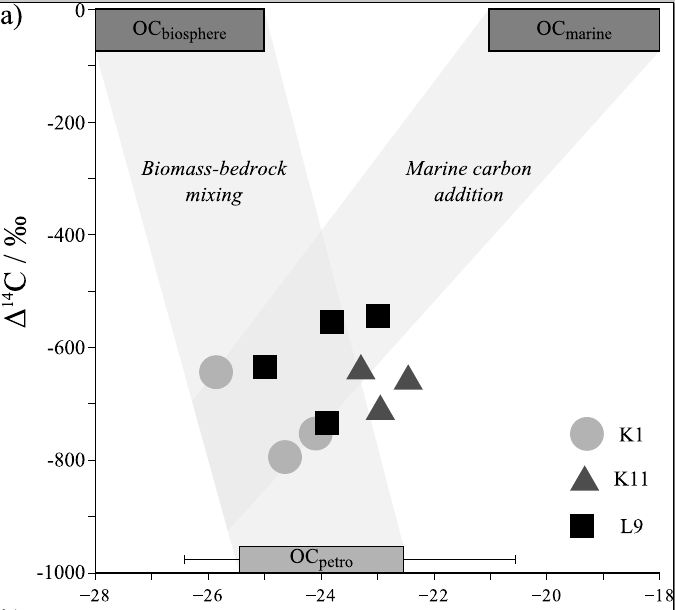

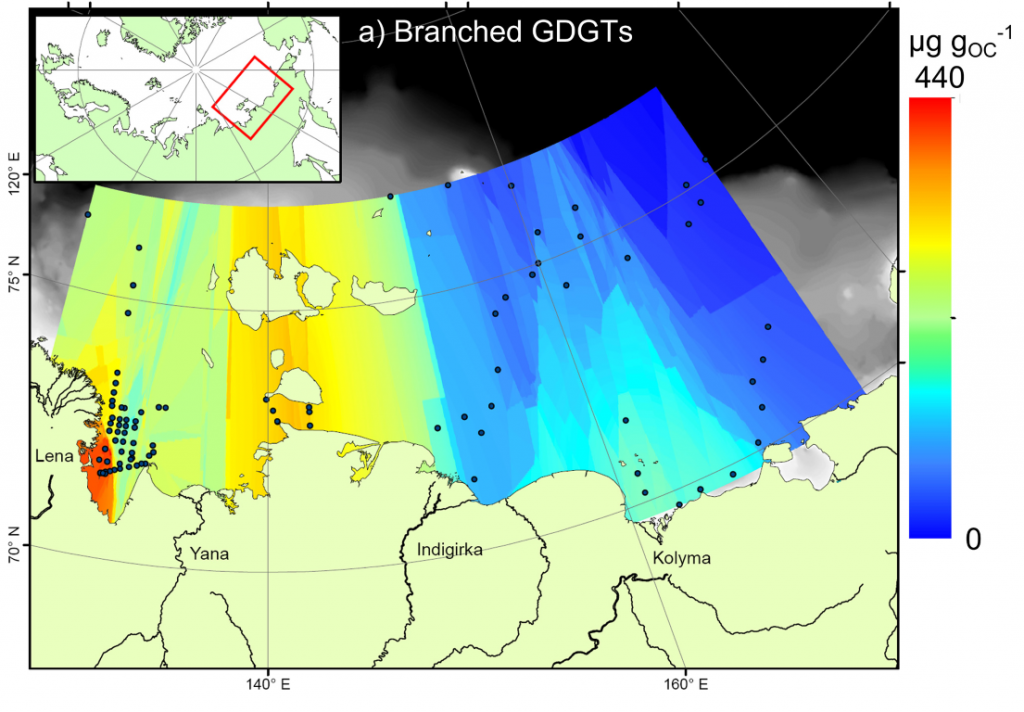

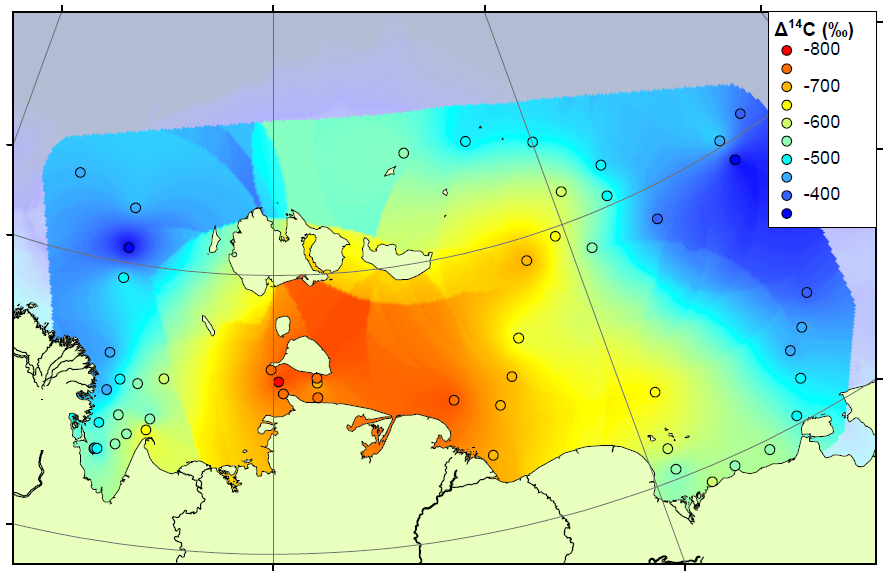

The study continues our work on the East Siberian Arctic Shelf and contains two new datasets. The first is a radiocarbon study, which measures the age of organic matter on the shelf (see map above). The age tells us whether the carbon has come from the ocean (very young), the topsoil (quite young) or the coastal permafrost (thousands of years old). We combined our results with those already measured on the shelf to form the most complete radiocarbon map for this area. The high-resolution map shows that areas close to the shore and away from the major rivers are home to very old carbon, almost certainly sourced from the erosion of old permafrost cliffs. Elsewhere on the shelf, the carbon is younger, but not as young as modern topsoil or ocean carbon. Some old carbon must be mixed in there too, so carbon from coastal erosion is having an influence right across the shelf.
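To make the age argument concrete, here is a minimal sketch of the arithmetic behind it. It is not the calculation used in the paper. It assumes only two carbon sources (one young, one old) and mixes them linearly in "fraction modern" units. The function names and any input values are hypothetical, and real source apportionment would normally use more sources and additional tracers.

```python
import math

# Libby mean life, the constant conventionally used for radiocarbon ages.
LIBBY_MEAN_LIFE_YEARS = 8033


def radiocarbon_age(fraction_modern: float) -> float:
    """Conventional radiocarbon age (years) from the fraction of modern 14C."""
    if fraction_modern <= 0:
        raise ValueError("fraction_modern must be positive")
    return -LIBBY_MEAN_LIFE_YEARS * math.log(fraction_modern)


def old_carbon_fraction(f_sample: float, f_young: float, f_old: float) -> float:
    """Share of old carbon in a sample, assuming a two-source linear mix.

    All three arguments are fraction-modern values. The two-source
    assumption is an illustration only, not the method of the paper.
    """
    if f_young == f_old:
        raise ValueError("the two sources must have different 14C contents")
    return (f_young - f_sample) / (f_young - f_old)
```

A sample whose fraction modern sits halfway between the young and old sources would come out as half old carbon, which is the sense in which "younger, but not as young as topsoil" still points to a permafrost contribution.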

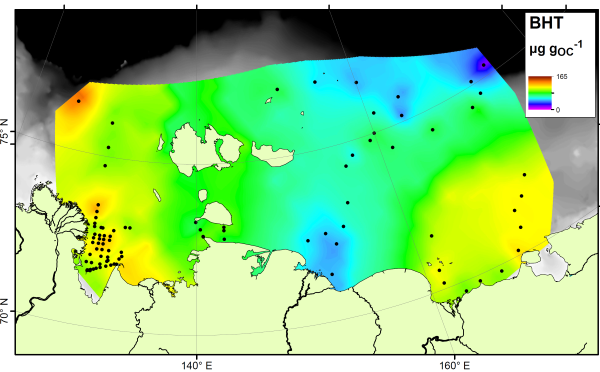

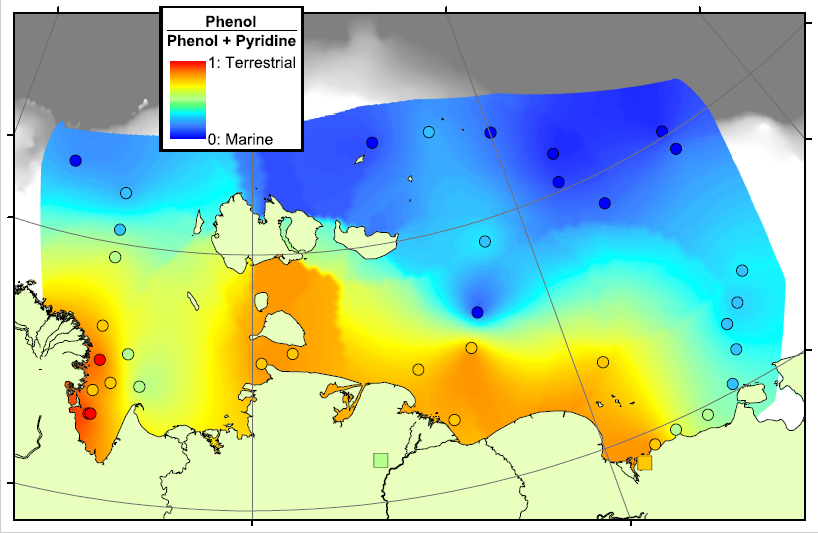

Our second technique is pyrolysis GC-MS, in which samples are heated to high temperatures so that the organic matter breaks apart into small fragments, which are then analysed by gas chromatography-mass spectrometry (GC-MS). This generates a very large number of fragments, too many to analyse individually, so we concentrated on a few target molecules. These included phenols, which are probably sourced from lignin, a major component of land plants, and pyridines, nitrogen-containing compounds that are probably sourced from proteins. We think that a lot of the pyridines in the Arctic Ocean come from organisms living in the ocean itself, which makes them a potential tracer for marine organic matter. By comparing the concentrations of phenols and pyridines, we can estimate the relative amounts of terrestrial and marine organic carbon in a sample.
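One simple way to express that comparison is as a single number between 0 and 1. The sketch below is an illustration under our own assumptions, not necessarily the exact index used in the paper. The function name is hypothetical, and the inputs can be any consistent measure of abundance (for example, summed peak areas).

```python
def terrestrial_index(phenols: float, pyridines: float) -> float:
    """Share of phenols in the combined phenol + pyridine signal.

    Values near 1 suggest mostly land-derived (lignin) material,
    values near 0 suggest mostly marine (protein) material.
    """
    if phenols < 0 or pyridines < 0:
        raise ValueError("abundances must be non-negative")
    total = phenols + pyridines
    if total == 0:
        raise ValueError("at least one abundance must be greater than zero")
    return phenols / total
```

Using a bounded ratio like this, rather than phenols divided by pyridines, avoids very large values where pyridines are scarce and makes samples easy to compare on a single colour scale.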

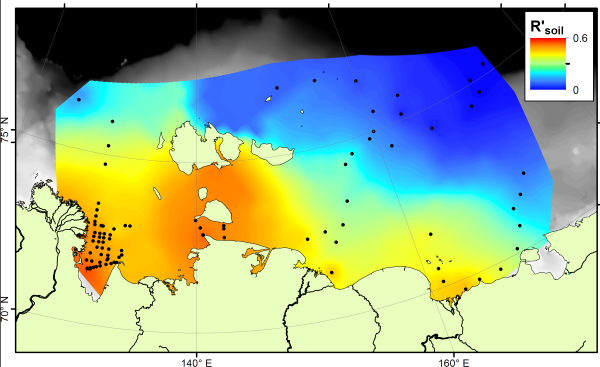

In the map above, red areas are dominated by phenols and are therefore rich in terrestrial carbon, while blue areas are dominated by pyridines and are therefore rich in marine carbon. This pattern matches our previous work in the region very well: there is a transition from terrestrial to marine conditions across the Arctic Shelf, and the transition zone extends for hundreds of kilometres offshore. This means that a lot of terrestrial carbon is being deposited, and hopefully buried, on the shelf, rather than all of the eroded carbon being degraded and released as CO2.

The Cryosphere, 10, 2485-2500, 2016

https://doi.org/10.5194/tc-10-2485-2016